Robust cybersecurity defense augments reliable prevention and protection technology with EDR. Winning with EDR is about knowing your network and maturing your security staff.

In spite of MITRE Engenuity’s clear guidance regarding the ATT&CK® Evaluation methodology and interpretation of the results – specifically, the part that says the “evaluations are not a competitive analysis” and that “there are no scores or winners” – a handful of participating vendors have already released boastful marketing materials claiming that they beat their competition.

While the motivations for such marketing bravado are understandable, they miss the point of the ATT&CK Evaluations. Forrester analysts explain this phenomenon in their blog post “‘Winning’ MITRE ATT&CK, Losing Sight Of Customers.”

The objective of this blog post is to provide a down-to-earth, factual overview of how ESET’s endpoint detection and response (EDR) solution, ESET Enterprise Inspector, performed in the evaluation. It also highlights some characteristics and features of our solution that MITRE Engenuity’s evaluation did not cover but that may be relevant to your organization’s overall needs.

Before diving in, it is important to note that we are not writing this from the perspective of a vendor that performed poorly in the evaluation. On the contrary, with visibility into more than 90% of the attack sub-steps (one of the metrics, explained in more detail below), ESET places among the top-ranked participating vendors – and that’s the one and only comparative statement in this article, made solely to illustrate the point above. So, let’s move beyond the hype and look at the results.

Evaluation methodology

In order to analyze the evaluation results properly, it’s important to understand the methodology and a few key terms. MITRE Engenuity provides very clear and detailed documentation for the evaluation. It ranges from an easy-to-read blog post (a good entry point, particularly if you’re not familiar with this most recent round) all the way to the Adversary Emulation Plan Library, which includes source code so that anyone can reproduce the results. Therefore, we’ll provide only a very brief overview of the methodology here.

In this most recent iteration, the evaluation emulated the techniques typically used by the Carbanak and FIN7 advanced persistent threat (APT) groups. We call these “financial APT groups,” because unlike most APT groups, their primary motivation appears to be financial gain, rather than nation-state espionage or cybersabotage, and unlike typical cybercriminal gangs, they utilize sophisticated techniques.

This means that if your organization is in the financial, banking, retail, restaurant, or hospitality sector, this evaluation should be particularly pertinent to you. The same applies, more generally, to any sector that uses point-of-sale terminals, because these threat actors target those devices. At the same time, the evaluation is still a relevant reference for EDR efficacy for organizations outside the typical scope of Carbanak and FIN7, because many of the emulated techniques are common to multiple APT groups.

The detection scenarios consisted of 20 steps (10 for Carbanak and 10 for FIN7) spanning a spectrum of tactics listed in the ATT&CK framework, from initial access to lateral movement, collection, exfiltration, and so on. These steps are then broken down to a more granular level – a total of 162 sub-steps (or 174 for vendors also participating in the Linux part of the evaluation). The MITRE Engenuity team recorded the responses and level of visibility at each sub-step for each participating EDR solution.

The results were then combined into various metrics, essentially based on the solution’s capability to see the behaviors of the emulated attack (Telemetry category) or to provide more detailed analytical data (General, Tactic, and Technique categories). For more details, read MITRE Engenuity’s documentation on detection categories.

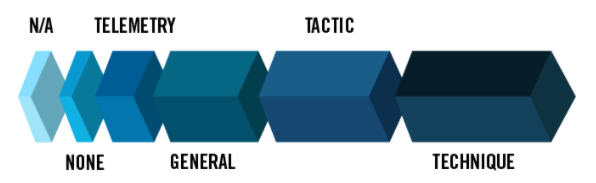

Figure 1 – Detection categories in the Carbanak and FIN7 Evaluation (Image source: MITRE)

New to this year’s round, in addition to the detection scenarios in which tested solutions were configured only to report but not prevent an attack from proceeding, there was also an optional protection scenario that tested each participating solution’s capabilities to block an attack from continuing. The protection scenario consisted of a total of 10 test cases (5 for Carbanak and 5 for FIN7).

As mentioned in the introduction, the ATT&CK Evaluations are different from traditional security software testing in that there are no scores, rankings, or ratings. The reasoning behind this is that organizations, security operations center (SOC) teams, and security engineers all have different levels of maturity and different regulations to comply with, along with a host of other sector-, company-, and site-specific needs. Hence, not all the metrics given in the ATT&CK Evaluations have the same level of importance to each evaluator. Other key parameters that the evaluation did not consider include EDR performance and resource requirements, noisiness (alert fatigue – any product could obtain a very high score on most of these results by producing alerts on every action recorded in the test environment), integration with endpoint security software, and ease of use.

Now, let’s take a look at how ESET’s EDR solution, ESET Enterprise Inspector, fared.

ESET’s evaluation results

The results are publicly available on MITRE Engenuity’s ATT&CK Evaluations website.

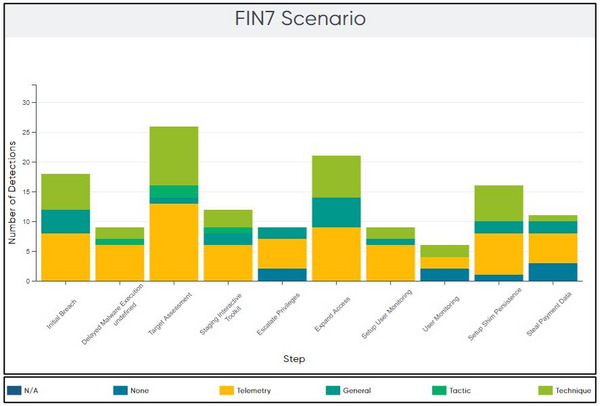

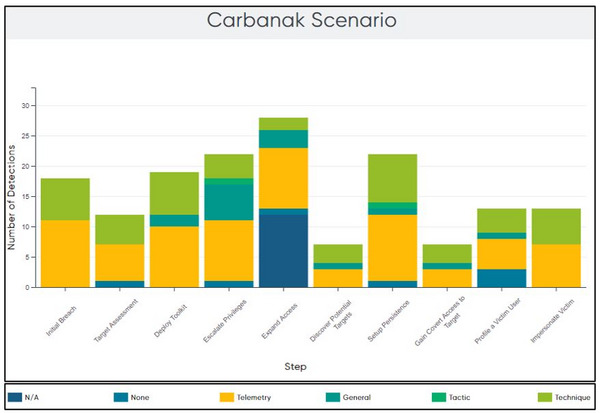

Out of the 20 steps in the detection evaluation, ESET Enterprise Inspector detected all steps (100%). Figures 2 and 3 illustrate the different types of detection per step.

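Figure 2 – Distribution of detection type by step in the Carbanak scenario (Image source: MITRE Engenuity)

Figure 3 – Distribution of detection type by step in the FIN7 scenario (Image source: MITRE Engenuity)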

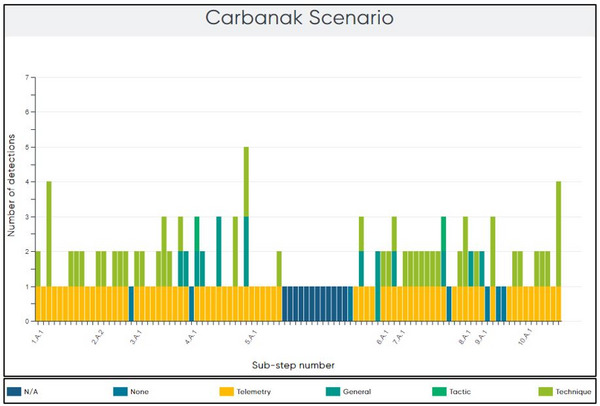

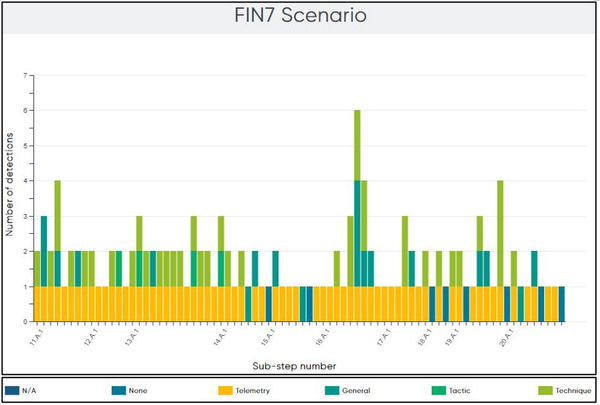

Breaking the attack emulation down to a more granular level, out of the 162 sub-steps in the emulation, ESET Enterprise Inspector detected 147 sub-steps (91%). Figures 4 and 5 illustrate the different types of detection per sub-step.

Figure 4 – Distribution of detection type by sub-step in the Carbanak scenario (Image source: MITRE Engenuity)

Figure 5 – Distribution of detection type by sub-step in the FIN7 scenario (Image source: MITRE Engenuity)

As the results indicate, ESET’s EDR solution gives defenders excellent visibility into the attacker’s actions on the compromised system throughout all attack stages.

While visibility is one of the most important metrics, it is not the only important one. Perhaps even more important for some SOC analysts is the alerting strategy (not part of the evaluation itself), which we discuss later.

Another metric that may be important for some is analytic coverage. It consists of detections that provide additional context, for example, why the attacker executed a specific action on the system. The three shades of green in the graphs above (General, Tactic, and Technique) show these detections. ESET Enterprise Inspector provided this extra information for 93 of the sub-steps (57%).
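The following minimal Python sketch makes the two headline metrics concrete. It computes visibility and analytic coverage from per-sub-step detection categories. The input format is our own illustration and not MITRE Engenuity’s published data format: a dictionary that maps each sub-step ID to the set of categories recorded for it. A sub-step counts as visible if it has any Telemetry, General, Tactic, or Technique detection. It counts toward analytic coverage if it has at least one of the last three.

```python
# Detection categories from the Carbanak and FIN7 round. Telemetry is raw
# visibility; General, Tactic, and Technique add analytic context.
ANALYTIC = {"General", "Tactic", "Technique"}
VISIBLE = ANALYTIC | {"Telemetry"}


def coverage(results: dict[str, set[str]]) -> tuple[float, float]:
    """Return (visibility, analytic coverage) as fractions of evaluated sub-steps.

    `results` maps a sub-step ID to the set of detection categories recorded
    for it. Sub-steps marked only "N/A" (e.g., a platform the vendor did not
    take part in) are left out of the denominator.
    """
    evaluated = [cats for cats in results.values() if cats != {"N/A"}]
    if not evaluated:
        return 0.0, 0.0
    visible = sum(1 for cats in evaluated if cats & VISIBLE)
    analytic = sum(1 for cats in evaluated if cats & ANALYTIC)
    return visible / len(evaluated), analytic / len(evaluated)


# Hypothetical toy input; these IDs and categories are made up.
example = {
    "S1": {"Telemetry", "Technique"},
    "S2": {"Telemetry"},
    "S3": {"None"},
    "S4": {"N/A"},
}
print(coverage(example))  # (0.6666666666666666, 0.3333333333333333)
```

With the counts reported above, visibility is 147 / 162 ≈ 0.907 and analytic coverage is 93 / 162 ≈ 0.574. These round to the published 91% and 57%.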

Note that ESET did not participate in the Linux part of the evaluation, so the results mark the Linux sub-steps as N/A. ESET Enterprise Inspector is currently available for Windows and macOS (macOS was not tested in this evaluation round), with Linux support expected in late 2021.

ESET did participate in the optional protection evaluation, with ESET Endpoint Security automatically intervening in 9 out of 10 test scenarios.

Why we didn’t achieve 100% coverage

Setting the Linux sub-steps aside, ESET Enterprise Inspector did not identify 15 of the 162 sub-steps. (ESET’s EDR solution for Linux was not yet available at the time of this evaluation round. ESET’s ecosystem does provide endpoint protection for Linux, but that was outside the scope of the evaluation.) These “misses” fall into one of two categories:

- Reporting on some events from specific data sources is intentionally disabled to reduce noise within the dashboard.

- The data source(s) for the detections without visibility have not yet been implemented, but additions are planned for upcoming versions.

Examples of detections that we have yet to implement are pass the hash (sub-step 5.C.1), command and control connection to a proxy (sub-step 19.A.3), and monitoring additional APIs (e.g., sub-steps 4.A.1, 9.A.2, 9.A.4, and 9.A.5). We consider the latter a higher priority and are planning to add these detections in the next release of ESET Enterprise Inspector.
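The sub-step IDs above follow a step.letter.number pattern. The small Python sketch below parses such IDs and groups them by the emulated group. It assumes that steps 1 to 10 belong to the Carbanak scenario and steps 11 to 20 to FIN7. That assumption is consistent with the last Carbanak sub-step being numbered 10.B.1. The helper names (`parse_substep`, `scenario`) are hypothetical.

```python
from typing import NamedTuple


class SubStep(NamedTuple):
    step: int     # 1-20
    letter: str   # letter group within the step
    index: int    # number within the letter group


def parse_substep(sub_id: str) -> SubStep:
    """Split an ID such as "8.A.3" into its three parts."""
    step, letter, index = sub_id.split(".")
    return SubStep(int(step), letter, int(index))


def scenario(sub: SubStep) -> str:
    # Assumption: steps 1-10 emulate Carbanak, steps 11-20 emulate FIN7.
    return "Carbanak" if sub.step <= 10 else "FIN7"


planned = ["4.A.1", "5.C.1", "9.A.2", "9.A.4", "9.A.5", "19.A.3"]
by_scenario: dict[str, list[str]] = {}
for sid in planned:
    by_scenario.setdefault(scenario(parse_substep(sid)), []).append(sid)
print(by_scenario)
# {'Carbanak': ['4.A.1', '5.C.1', '9.A.2', '9.A.4', '9.A.5'], 'FIN7': ['19.A.3']}
```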

We have not implemented monitoring for some APIs because the system generates an enormous number of API calls, and monitoring all of them is neither feasible nor desirable. Instead, it is important to select carefully only those calls that are useful to the SOC analyst and that do not produce unnecessary noise.

An example of the first category, misses by choice, is file read operations (e.g., parts of sub-steps 2.B.5, 9.A.5, and 20.B.5). Again, the system performs a huge number of such operations, so logging all of them is not feasible: it would place an enormous burden on system resources. Instead, we opt for a much more efficient route: monitoring file read operations[1] of carefully selected key files (for example, files that store browser login credentials), while also monitoring network traffic for the corresponding process.
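The following Python sketch roughly illustrates the watchlist approach described above. It keeps only file read events that touch a short list of sensitive files, then flags processes that both read such a file and open a network connection. The event schema, the `suspicious_pids` helper, and the watchlist are hypothetical. The paths are well-known browser credential stores, used only as examples; they are not ESET Enterprise Inspector’s actual rule set.

```python
from dataclasses import dataclass

# Hypothetical watchlist of path suffixes (lowercase). Examples only: the
# Chrome saved-password database and Firefox's login and key stores.
WATCHED_SUFFIXES = (
    r"\google\chrome\user data\default\login data",
    r"\logins.json",
    r"\key4.db",
)


@dataclass
class Event:
    kind: str    # "file_read" or "net_connect" (hypothetical schema)
    pid: int
    target: str  # file path for reads, "host:port" for connections


def suspicious_pids(events: list[Event]) -> set[int]:
    """Return PIDs that read a watched file and, at any point, connect out."""
    read_watched: set[int] = set()
    connected: set[int] = set()
    for ev in events:
        if ev.kind == "file_read":
            # Reads of unwatched files are simply dropped, which keeps the
            # volume of recorded events small.
            if ev.target.lower().endswith(WATCHED_SUFFIXES):
                read_watched.add(ev.pid)
        elif ev.kind == "net_connect":
            connected.add(ev.pid)
    return read_watched & connected


events = [
    Event("file_read", 4012,
          r"C:\Users\alice\AppData\Local\Google\Chrome\User Data\Default\Login Data"),
    Event("net_connect", 4012, "203.0.113.7:443"),
    Event("file_read", 388, r"C:\Windows\System32\kernel32.dll"),
    Event("net_connect", 388, "198.51.100.2:53"),
]
print(suspicious_pids(events))  # {4012}
```

The design trade-off mirrors the text above. Recording every read would be exhaustive but prohibitively expensive. A narrow watchlist combined with a network signal is cheap, and it still surfaces the combination that matters for credential theft.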

The key principle when designing an effective EDR solution (and this applies to endpoint security software as well) is balance. In theory, it’s easy to create a solution that achieves 100% detection: simply detect everything. Of course, such a solution would be next to useless. This is precisely why traditional endpoint protection tests have always included a false-positive metric: a true comparative test cannot be done without testing for false positives.

Yes, the situation is a bit different with EDR than with endpoint protection (because you can monitor or detect without alerting), but the principles still apply: too many detections create too much noise, leading to alert fatigue. SOC analysts then face a heavier workload, sifting through a large number of detections or alerts. The result is the exact opposite of the desired effect, because the noise distracts them from genuine high-severity alerts. Besides adding to the human workload, too many lower-importance detections also increase costs through higher performance and data storage requirements.
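The “detect everything” trap can be put in standard precision and recall terms. In this minimal Python sketch, which uses made-up numbers, alerting on every recorded event catches all attacker actions (a recall of 1.0), but only 0.2% of the alerts are genuine. A selective strategy that misses a couple of actions keeps both metrics high. The event counts and the `precision_recall` helper are illustrative assumptions, not data from the evaluation.

```python
def precision_recall(alerted: set[int], malicious: set[int]) -> tuple[float, float]:
    """Precision: share of alerts that are malicious.
    Recall: share of malicious events that raised an alert."""
    true_pos = len(alerted & malicious)
    precision = true_pos / len(alerted) if alerted else 0.0
    recall = true_pos / len(malicious) if malicious else 0.0
    return precision, recall


# Hypothetical telemetry: 10,000 recorded events, 20 of them attacker actions.
all_events = set(range(10_000))
malicious = set(range(20))

# Strategy 1: alert on every event. Recall is perfect, precision collapses.
print(precision_recall(all_events, malicious))  # (0.002, 1.0)

# Strategy 2: alert selectively, catching 18 of 20 actions with 2 false alarms.
selective = set(range(18)) | {5000, 7000}
print(precision_recall(selective, malicious))   # (0.9, 0.9)
```

Under the first strategy, an analyst would have to review 10,000 alerts to find 20 real ones, which is alert fatigue in numerical form.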

[1] Note that this discussion concerns file read operations in particular, which are the most numerous. ESET Enterprise Inspector also provides visibility into other important file system operations, such as writing, renaming, and deleting files. These are less numerous, so there are fewer restrictions on which files are monitored.

Having said that, good visibility is an important metric for an EDR solution (ESET’s evaluation result was over 90%); it’s just not the only important metric to consider.

ESET Enterprise Inspector’s alerting strategy

In our opinion, the key purpose – and most important feature – of a good EDR solution is its ability to spot an ongoing attack and assist the defender in reacting, mitigating, and investigating it.

As already explained, one of the main issues an ineffective EDR solution can present for a SOC analyst is alert fatigue. The way an effective EDR solution addresses this issue – in addition to prioritizing data sources, as highlighted in the previous section – is a good alerting strategy. In other words, an EDR solution should present all of the monitored activity within the system(s) to SOC analysts in a way that draws their focus to likely indicators of an attack.

Due to each vendor’s unique take on alerting strategy and the understandable difficulty in comparing them, this important aspect was not a direct part of the evaluation. Instead, the alerting strategy is summarized by MITRE in the overview section of each vendor’s results.

For ESET Enterprise Inspector, the alerting strategy is described by MITRE Engenuity as follows: “Events that match analytic logic for malicious behaviors are assigned appropriate severity values (Informational, Warning, Threat). These alerted events are also enriched with contextual information, such as a description and potential mappings to related ATT&CK Tactics and Techniques. Alerted events are aggregated into specific views as well as highlighted (with specific icons and colors) when included as part of other views of system events/data.”
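The Python sketch below makes MITRE Engenuity’s description more concrete. It models alerted events with the three severity values the description names and attaches a description and ATT&CK technique IDs to each. It then aggregates the alerts into a per-host triage view, with the most severe hosts first. The class names, rule names, and grouping logic are our own hypothetical illustration of the general idea, not ESET Enterprise Inspector’s data model. The technique IDs (T1571, Non-Standard Port; T1021.005, Remote Services: VNC) are real ATT&CK entries, used here only as examples.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Severity(IntEnum):
    # The three values named in MITRE Engenuity's summary; numeric order is ours.
    INFORMATIONAL = 1
    WARNING = 2
    THREAT = 3


@dataclass
class Alert:
    host: str
    rule: str                      # hypothetical rule name
    severity: Severity
    description: str
    attack_ids: list[str] = field(default_factory=list)  # ATT&CK technique IDs


def triage_view(alerts: list[Alert]) -> dict[str, list[Alert]]:
    """Group alerts by host; order hosts and their alerts by severity, highest first."""
    by_host: dict[str, list[Alert]] = {}
    for alert in alerts:
        by_host.setdefault(alert.host, []).append(alert)
    for host_alerts in by_host.values():
        host_alerts.sort(key=lambda a: a.severity, reverse=True)
    # After sorting, each host's first alert is its most severe one.
    ranked = sorted(by_host.items(), key=lambda item: item[1][0].severity, reverse=True)
    return dict(ranked)


alerts = [
    Alert("ws-01", "scheduled_task", Severity.INFORMATIONAL, "New scheduled task created"),
    Alert("ws-02", "protocol_mismatch", Severity.THREAT,
          "TLS traffic on a non-standard port", ["T1571"]),
    Alert("ws-01", "vnc_connection", Severity.WARNING,
          "Outbound VNC session", ["T1021.005"]),
]
view = triage_view(alerts)
print(list(view))                       # ['ws-02', 'ws-01']
print([a.rule for a in view["ws-01"]])  # ['vnc_connection', 'scheduled_task']
```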

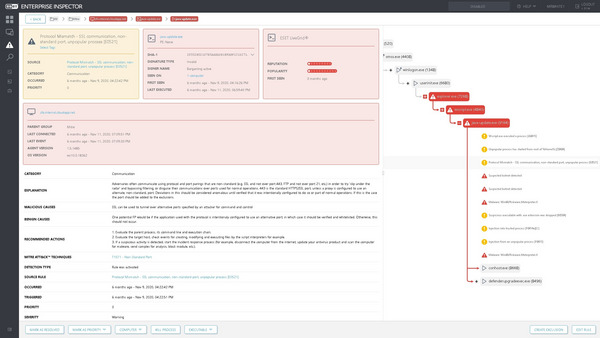

As an example, Figure 6 shows the detailed view of an alert raised following the emulation of sub-step 8.A.3.

In this step, the attacker sets up covert access to the target via an HTTPS reverse shell.

Figure 6 – ESET Enterprise Inspector event details triggering Protocol Mismatch rule

ESET Enterprise Inspector detected the creation of this SSL tunnel and collected additional contextual information useful for the SOC analyst, including details on the protocol mismatch and non-standard port usage, and the execution chain and process tree – highlighting further related events that were suspicious or clearly malicious.
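Reconstructing an execution chain like the one shown in the alert details amounts to walking parent-process links. The following Python sketch does this over a hypothetical table of process records. The process names and PIDs are invented for illustration and do not reproduce the chain observed in sub-step 8.A.3.

```python
from dataclasses import dataclass


@dataclass
class Process:
    pid: int
    ppid: int     # parent process ID
    image: str    # executable name


def execution_chain(pid: int, procs: dict[int, Process]) -> list[str]:
    """Follow parent links from `pid` and return image names, root first."""
    chain: list[str] = []
    seen: set[int] = set()  # stop if PID reuse ever creates a cycle
    while pid in procs and pid not in seen:
        seen.add(pid)
        proc = procs[pid]
        chain.append(proc.image)
        pid = proc.ppid
    return chain[::-1]


# Hypothetical process table; PIDs and images are invented.
procs = {
    1000: Process(1000, 4, "explorer.exe"),
    2000: Process(2000, 1000, "winword.exe"),
    3000: Process(3000, 2000, "cmd.exe"),
    4000: Process(4000, 3000, "powershell.exe"),
}
print(" -> ".join(execution_chain(4000, procs)))
# explorer.exe -> winword.exe -> cmd.exe -> powershell.exe
```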

An explanation of the observed behavior is provided, along with a link to the MITRE ATT&CK knowledge base and the typical causes of this type of behavior, both malicious and benign. This is especially helpful in ambiguous cases, where potentially dangerous actions serve legitimate purposes as part of the organization’s specific internal processes. It is up to the SOC analyst to investigate such cases and tell the two apart.

Recommended actions are also provided, along with tools to mitigate the threat directly within ESET Enterprise Inspector, such as terminating the process or isolating the host.

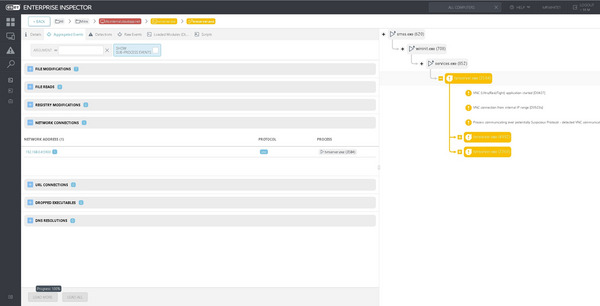

Figure 7 – ESET Enterprise Inspector event details for VNC communication

In the last sub-step of the Carbanak emulation scenario (10.B.1), the adversary attempts to access the target’s desktop via VNC. Again, this communication is clearly visible to ESET Enterprise Inspector and is flagged as suspicious, with all relevant details provided (see Figure 7 above). Note that the protocol is identified by analyzing the content of the network traffic. As a result, the protocol is identified even when non-standard network ports are used. The corresponding rules in ESET Enterprise Inspector can also be triggered even if the originating process is obfuscated or uses masquerading techniques.
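A few well-known signatures can illustrate how a protocol is identified from traffic content rather than from the port:

- A TLS record starts with content type 0x16 (handshake), followed by major version 0x03.
- An SSH identification string starts with `SSH-` (RFC 4253).
- A VNC server’s RFB ProtocolVersion message starts with `RFB ` (RFC 6143).

The Python sketch below uses these signatures. It flags a protocol mismatch when the detected protocol appears on a port other than its customary one. The port table and the helper names are simplified assumptions; this is not how ESET Enterprise Inspector’s rules are implemented.

```python
from typing import Optional

# Customary ports per protocol (simplified; real deployments vary).
EXPECTED_PORTS = {
    "TLS": {443, 8443},
    "SSH": {22},
    "VNC": {5900},
    "HTTP": {80, 8080},
}

HTTP_METHODS = (b"GET ", b"POST ", b"PUT ", b"HEAD ", b"DELETE ", b"OPTIONS ")


def identify_protocol(payload: bytes) -> Optional[str]:
    """Guess the protocol from the first bytes of a stream, ignoring the port."""
    if payload[:2] == b"\x16\x03":
        return "TLS"   # handshake record, SSL/TLS major version 3
    if payload.startswith(b"SSH-"):
        return "SSH"   # identification string, e.g. b"SSH-2.0-..."
    if payload.startswith(b"RFB "):
        return "VNC"   # RFB ProtocolVersion, e.g. b"RFB 003.008\n"
    if payload.startswith(HTTP_METHODS):
        return "HTTP"
    return None


def protocol_mismatch(payload: bytes, port: int) -> Optional[str]:
    """Return a finding if a recognized protocol runs on an unexpected port."""
    proto = identify_protocol(payload)
    if proto is not None and port not in EXPECTED_PORTS[proto]:
        return f"{proto} observed on non-standard port {port}"
    return None


print(protocol_mismatch(b"\x16\x03\x01\x00\xa5", 4444))  # TLS observed on non-standard port 4444
print(protocol_mismatch(b"RFB 003.008\n", 5900))          # None
```

A production detector would also need stream reassembly, awareness of traffic direction (the RFB banner is sent by the server), and many more protocols. The point here is only that the verdict depends neither on the port nor on the name of the originating process.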

Going back to the purpose of a good EDR solution as outlined at the beginning of this section, its essential role is not necessarily to alert the analyst to every single procedure carried out during an attack (or every sub-step in the ATT&CK Evaluation). Rather, it should alert them that an attack took place (or is ongoing), then assist them in investigating it. It does this by letting the analyst navigate transparently through detailed, logically structured evidence of what happened in the environment and when. We continue to place great emphasis on this functionality in developing ESET Enterprise Inspector.

Conclusion

We are happy to see that the rigorous MITRE ATT&CK Evaluation demonstrated the qualities of our EDR technology and validated our vision and roadmap for ESET Enterprise Inspector going forward.

It’s important to keep in mind that the development of a good EDR solution cannot be a static undertaking – as adversary groups change and improve their techniques, so must EDR and endpoint protection platforms keep pace in order to continue protecting organizations from real-world threats.

And that’s exactly the case with ESET Enterprise Inspector: it is not an EDR solution whose development is disconnected from active threat research. No, it’s our experts who track the world’s most dangerous APT groups and cybercriminals who also ensure ESET Enterprise Inspector’s rules are effective and capable of detecting malicious activity on targeted systems.

ESET Enterprise Inspector is just one of the components in our comprehensive cybersecurity portfolio, perfectly balanced to deliver reliable protection against cyberattacks. ESET Enterprise Inspector is an integral part of ESET’s multi-layered security ecosystem, which includes strong and accurate endpoint security, cloud sandboxing (ESET Dynamic Threat Defense), machine learning-based detection technologies, and LiveGrid® telemetry and threat intelligence coming from a user base of millions of endpoints (which, among other benefits, allows ESET Enterprise Inspector to factor into its decisions the reputation of binaries and processes).

We at ESET believe this unified approach to delivering security solutions is absolutely crucial. Great visibility into an attack that executed on your network is important. It is much more important to be able to spot and recognize that attack among a myriad of events or, even better, to prevent it from happening at all.

We encourage readers to consider their own needs, requirements, and preferences, and then to dive into the publicly available ATT&CK Evaluation results and other resources.